Cold Data: Use It! Don't Lose It

CIOs Reduce Data Storage Costs by 70%

As a CIO or senior executive responsible for Infrastructure and Operations (I&O), two of the highest priorities are to manage costs while adding value to the corporation. Achieving success, given these often-conflicting priorities, can be a challenge. Imagine if you could reduce your data storage budget by 70% or more while giving the corporation access to an existing valuable asset which they could not previously use or monetize. This can be achieved through valid identification and tiering of cold data to cloud or commodity storage.

Cold Data Characteristics

Upon its creation, unstructured data always starts as hot. Hot data may be accessed at any time by users and their applications with the expectation of reliable performance. But unstructured data has two defining properties: it cools very rapidly and, as it ages, the probability of it being accessed drops dramatically. In fact, based on our research, unstructured data with no access within the last 90 days has, until recently, had a minimal chance of being used again. Between 75 and 90 percent of unstructured data is cold.[1]

Unstructured data typically accounts for 80% of corporate data[2], and it is growing at a compound annual growth rate of between 55% and 65%[3]. For many organizations, this is too much data with too much churn to manage cost-effectively. Cold data is managed and stored as if it were hot data. It resides on primary file servers, which are optimized for performance, carry higher operating costs and are therefore always the more expensive storage configuration. CIOs can reduce data storage costs by 70% with new cold data storage and management solutions. Hot data belongs on primary storage systems. Cold data does not.

It is a waste of expensive resources to keep cold data on primary file systems. Imagine decreasing your data storage costs by 70%.

The Growing Value of Cold Data

However, cold data is becoming more valuable. Over the last five years, the elastic availability of computational resources has boomed with the growth of virtualization, containerization and the mainstream onset of cloud computing. With ubiquitous computational capability comes the ability to process and analyze much more data than ever before. IDC projects that the global datasphere will grow to 175 zettabytes by 2025[4]. Artificial Intelligence (AI), Machine Learning (ML), Internet of Things (IoT), genomic analytics, autonomous vehicles, seismological studies, real-time meteorological analytics and complex Business Intelligence (BI) models are just a few examples of applications which produce and consume more and more cold data. Smart Filer’s greater capability to access data brings an increased demand to analyze cold data, giving that data a new surge in value. This value is either realized in-house or monetized externally through the bulk leasing of data for the insights it contains. For example, once a genome has been mapped, it is more effective and productive for others to leverage that data for their research than to regenerate it from first principles. Whether it is used internally or externally, this bulk, often cold, data only has value if it is readily available.

Organizations must keep a variety of logs, records, reports and other data as required by their regulators. Such regulatory or compliance data is most often cold, sitting idle and unchanged for long periods. However, when the regulators request this data, it must be readily accessible. Organizations that rely on older archiving technologies to keep their historical compliance data have genuine concerns that some of their content may not be readily accessible, if it is accessible at all. Not having this data, or having it where it takes too long to recover, can expose companies to significant costs for non-compliance.

Historically, all old data (not necessarily just cold data) was archived, relegated to dedicated magnetic media vault systems, optical discs or magnetic tape systems. These were low-cost mechanisms for maintaining the data for long periods. These systems were acceptable when the reasons for keeping the older data were to satisfy traditional regulatory and data governance requirements and when data recovery times were measured in days and weeks. For cold data to achieve its value to the corporation, the historical archive mechanisms are no longer acceptable. Data with restoration times measured in days and weeks is virtually unusable and is effectively lost. These archival solutions are also no longer the lowest-cost options for dependable long-term storage. The new standard for archives that balance economy, reliability and availability is cloud storage, be it private or public.

However, cloud storage alone does not fully meet the criteria for data value. For cold data to attain its value, it must be usable. To be usable, it must be reachable and recoverable. Therefore, transparency and the seamlessness of data tiering are critical. For unstructured data to be genuinely usable, it must be accessible in the same manner, whether hot or cold.

Cold Data Challenge

As discussed above, the issue with cold data is the high cost of keeping idle data in primary storage infrastructure that is designed for active data. An IT organization addressing this issue directly faces two challenges:

- Being able to identify and offload cold data in an ever-growing set of unstructured data.

- Being able to realize the value of offloaded cold data by making it usable, specifically readily available and recoverable on-demand, in a timely fashion, through the same mechanisms users and applications use to access hot data.

Leonovus Inc. has an easy-to-use solution that solves both challenges at once.

Leonovus Smart Filer is an Information Lifecycle Management (ILM) solution that makes cold data management easy and cost-effective. It is software that enables an organization to look at each of its file shares and generate at high speed an inventory of its data, identifying the cold data within each share. Smart Filer’s data analytics and policy-based management then provide the mechanisms to automatically and continuously offload cold data without changing the nature of user or application interactions with it. Both offloading and recovery are seamless, transparent and efficient, moving cold data to lower-cost storage locations while keeping it accessible and usable.

70% Cost Reduction Through Cold Data Management

As an example, consider a company that has 100 TB of primary file storage configured with high-speed SSDs and available corporately over SMB (Server Message Block protocol) shares. Such equipment could have a three-year total cost of ownership of approximately $91 per TB per month, which amounts to roughly $328,000 for 100 TB over 36 months[5]. The company accepts the price of this file server because the performance it offers enables its employees to work quickly and effectively. Unfortunately, in this example, the server has reached capacity at 95% utilization and can effectively store no more data. At current growth rates, the company expects it will need to double its current capacity to over 200 TB over the next three years. The company has a dilemma: buy more expensive SSD hardware or lose data through deletion. The problem is hard because the team managing the storage does not have the visibility to know which data to keep and which can be deleted. Users, busy in their own jobs, have neither the time nor the ability to aid in this task.
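The three-year figure follows directly from the per-TB monthly rate. The short Python sketch below reproduces the arithmetic using only the numbers quoted in the example; the variable names are illustrative.

```python
# Figures from the example above; names are illustrative only.
COST_PER_TB_PER_MONTH_USD = 91   # approximate TCO rate for SSD-based primary storage
CAPACITY_TB = 100                # current primary file storage
MONTHS = 36                      # three-year ownership period

three_year_tco_usd = COST_PER_TB_PER_MONTH_USD * CAPACITY_TB * MONTHS
print(f"Three-year TCO: ${three_year_tco_usd:,}")  # Three-year TCO: $327,600
```

Rounded to the nearest thousand, this gives the $328,000 cited above.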

In search of a solution, the storage team turns to Leonovus Smart Filer. Within minutes, Smart Filer is installed and configured to mount file shares. It starts generating an inventory report which categorizes the files by last access date and type. The storage team configures a Smart Filer policy to automatically migrate cold files, defined here as files that have not been accessed in more than 90 days (or as another corporate policy dictates), to less expensive secondary storage, whether commodity storage or cloud object storage. The company now frees up its expensive SSD resource for hot data, and the cold files appear to still reside on the primary file server, even though they are now in secondary storage. Users access the cold files exactly as they did before, through the primary file server.

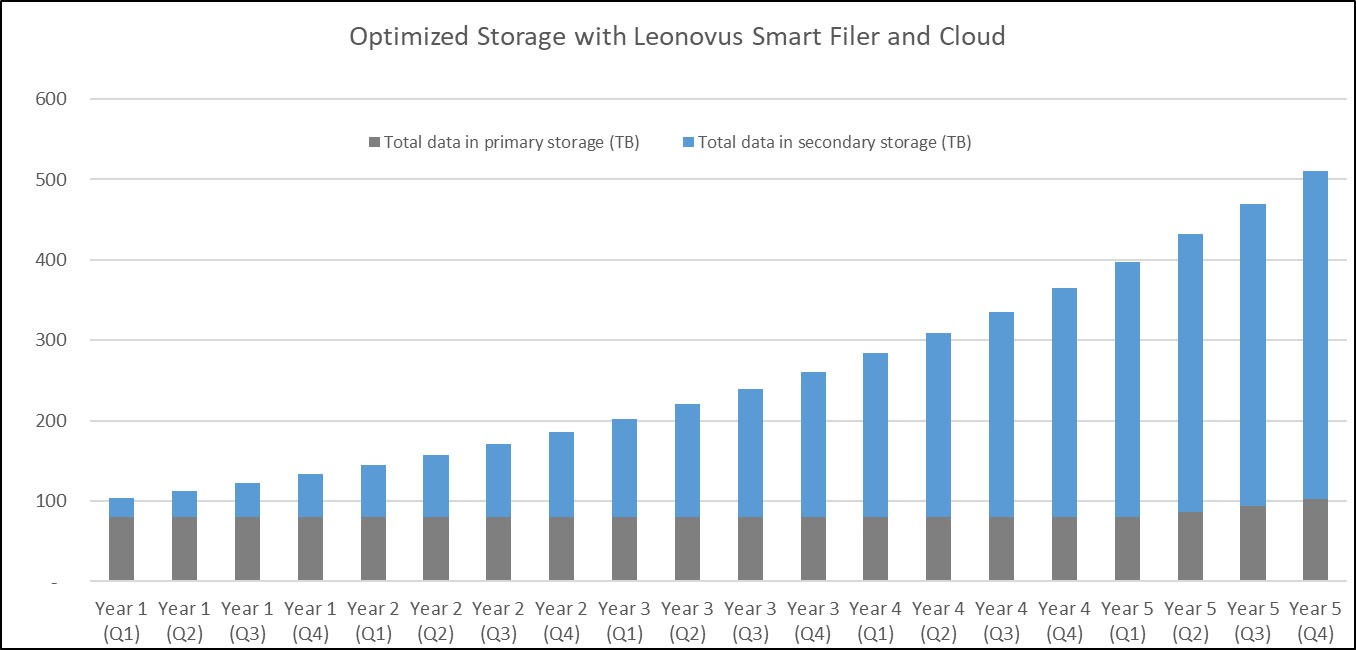
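To make the 90-day rule concrete, the sketch below walks a directory tree and lists files whose last access time is older than a threshold. It is a minimal illustration in Python and not Smart Filer's implementation: the function name `find_cold_files`, its parameters and the reliance on the file system's access timestamp are all assumptions made for this example. Note that some file systems are mounted so that access times are not updated on every read, in which case a scan like this would overestimate how cold the data is.

```python
import os
import time
from pathlib import Path

SECONDS_PER_DAY = 86_400

def find_cold_files(root, max_idle_days=90, now=None):
    """Yield (path, size_bytes, idle_days) for files not accessed within max_idle_days.

    Hypothetical helper for illustration; it only reads metadata and moves nothing.
    """
    now = time.time() if now is None else now
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                st = path.stat()
            except OSError:
                continue  # file vanished or is unreadable; skip it
            idle_days = (now - st.st_atime) / SECONDS_PER_DAY
            if idle_days > max_idle_days:
                yield path, st.st_size, idle_days
```

Summing the sizes returned for a share gives a rough estimate of how much capacity a 90-day policy would free on primary storage.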

As described above, the company currently has 100 TB of storage and expects it will need at least 200 TB of storage in the next three years. Using Smart Filer, the company can continue to use its existing 100 TB primary storage infrastructure and establish a policy to offload cold data. For the sake of this analysis, let’s assume that the company tracks with global averages, and roughly 80% of its data is cold. To ensure it has operating headroom in its primary storage, it plans to reduce utilization from 95% to 80%. This leaves room for spikes in active/hot data. The result is that, after three years, the company will have 80% utilization on its 100 TB primary storage. The remaining 120+ TB will be cold and will have been automatically offloaded by Smart Filer to much cheaper cloud storage. This balance between primary and secondary storage distribution is illustrated below.

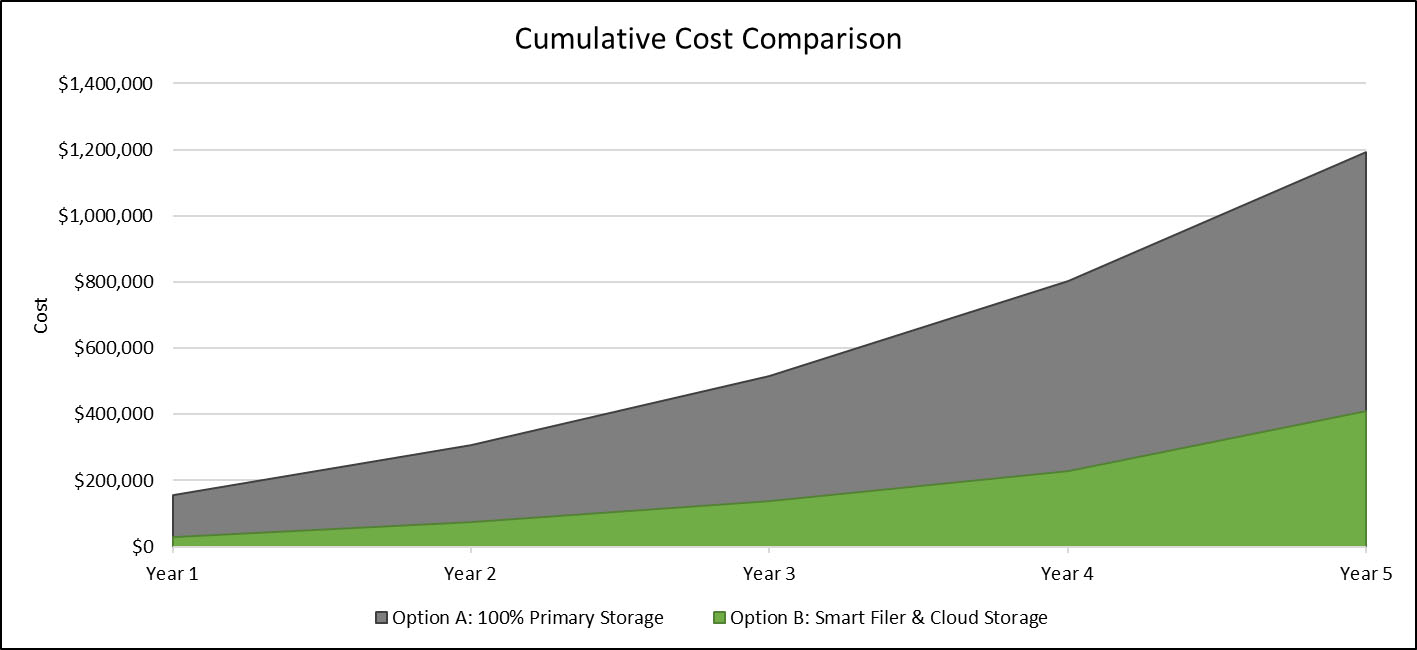
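The split between primary and secondary storage described above can be checked with a few lines of arithmetic. This Python sketch uses only the figures from the example (100 TB primary capacity, an 80% utilization target and a projected total of 200 TB); the variable names are illustrative.

```python
# Figures from the example above; names are illustrative only.
PRIMARY_CAPACITY_TB = 100       # existing primary storage
TARGET_UTILIZATION_PCT = 80     # planned utilization after offloading
PROJECTED_TOTAL_TB = 200        # expected data volume in three years

primary_used_tb = PRIMARY_CAPACITY_TB * TARGET_UTILIZATION_PCT // 100
offloaded_tb = PROJECTED_TOTAL_TB - primary_used_tb

print(f"Kept on primary storage: {primary_used_tb} TB")      # 80 TB
print(f"Offloaded to secondary storage: {offloaded_tb} TB")  # 120 TB
```

If the total grows beyond 200 TB, the offloaded volume grows with it while the primary footprint stays fixed, which is why the text gives the cold portion as 120+ TB.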

By using Smart Filer, the company achieves its strategic storage goals while spending only $30,000 on storage in the first year. If it had gone the route of purchasing new primary storage capacity, the first year would have cost $154,000. Using Smart Filer reduces the company's unstructured storage costs by over 80%. As illustrated in this Cumulative Cost Comparison curve, the use of Smart Filer continues these savings through the full three-year cost of ownership, saving the organization over 70% in storage costs compared with acquiring and operating additional primary storage.
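The first-year comparison reduces to a simple ratio. The Python sketch below reproduces it from the two dollar figures quoted above; the variable names are illustrative.

```python
# First-year figures from the example above; names are illustrative only.
SMART_FILER_YEAR1_USD = 30_000    # first-year storage spend with cold data offloaded
NEW_PRIMARY_YEAR1_USD = 154_000   # first-year spend on additional primary storage

year1_savings = 1 - SMART_FILER_YEAR1_USD / NEW_PRIMARY_YEAR1_USD
print(f"First-year savings: {year1_savings:.1%}")  # First-year savings: 80.5%
```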

The benefits of Smart Filer are realized without users being aware of any change or altering how they work. Similarly, the storage team uses its existing resources and skills without increasing its own workload or operating costs.

Offloaded cold data is always accessible and usable. For example, if a GDPR compliance request requires data activity logs that have gone cold, the log files will be found where they were initially created and stored. While there may be a brief delay in recovery (measured in seconds), they can be opened and edited as if they were still hot.

Conclusion: 70% Cost Savings and Other Value with Cold Data Management

In summary, 80% or more of a corporation’s unstructured data, on average, can be offloaded from primary storage to low-cost secondary storage or hybrid cloud storage, freeing up valuable resources. To deliver these savings, the offloading itself must be efficient. A solution that is hands-free and automatic, requires minimal effort from administrators and has no impact on users and applications is essential.

Indeed, when users and applications still access the offloaded cold data in the same manner as when it was hot, that data is readily available and can be deemed usable. Usable cold data has a real monetary value to the organization. With such a solution in place, the CIO achieves the dream of saving the company money and adding value at the same time. Leonovus Smart Filer makes this dream a reality.

For more information on Smart Filer see: www.leonovus.com/products/smart-filer.

[1] Source: TechTarget – SearchStorage 2017, Staimer, Marc; https://searchstorage.techtarget.com/feature/No-data-left-behind-Demand-for-cold-data-storage-heats-up

[2] Source: TechRepublic 2017, Shacklett, Mary; https://www.techrepublic.com/article/unstructured-data-the-smart-persons-guide/

[3] Source: Datamation 2018, Taylor, Christine; https://www.datamation.com/big-data/structured-vs-unstructured-data.html

[4] Source: IDC Data Age 2025 report, sponsored by Seagate; https://www.networkworld.com/article/3325397/idc-expect-175-zettabytes-of-data-worldwide-by-2025.html

[5] Estimated three-year total cost of ownership for an SSD-based NAS running on-premises in a data protecting RAID configuration based on corporate averaged data compiled by Amazon Web Services Inc.